Tether’s second reserve asset is intelligence

Tether’s new QVAC project begins with an unusual phrase for a stablecoin company. The company describes “QVAC Psy” as a family of foundational models “rooted in the principles of Psychohistory.”

Psychohistory comes from Isaac Asimov’s Foundation universe, where Hari Seldon uses mathematics, statistics, and social dynamics to forecast the behavior of very large populations and shorten the dark age after the Galactic Empire’s collapse.

The Encyclopedia of Science Fiction describes Asimovian psychohistory as an “Imaginary Science,” while Seldon’s work is a plan that predicts future events and preserves knowledge through systemic breakdown.

Tether’s wording functions as a mission statement wrapped in science-fiction language.

The company built the largest stablecoin in crypto by turning reserves, liquidity, and distribution into a monetary infrastructure. QVAC applies the same instinct to intelligence.

Tether’s first reserve asset remains the dollar-like liability at the center of USDt. Its second reserve asset is becoming compute, models, datasets, and the ability to run AI outside centralized clouds.

From dollar reserves to intelligence reserves

Tether’s expansion into AI follows the mechanics of its core business. USDt converts demand for offshore dollars into a reserve stack dominated by short-duration sovereign instruments.

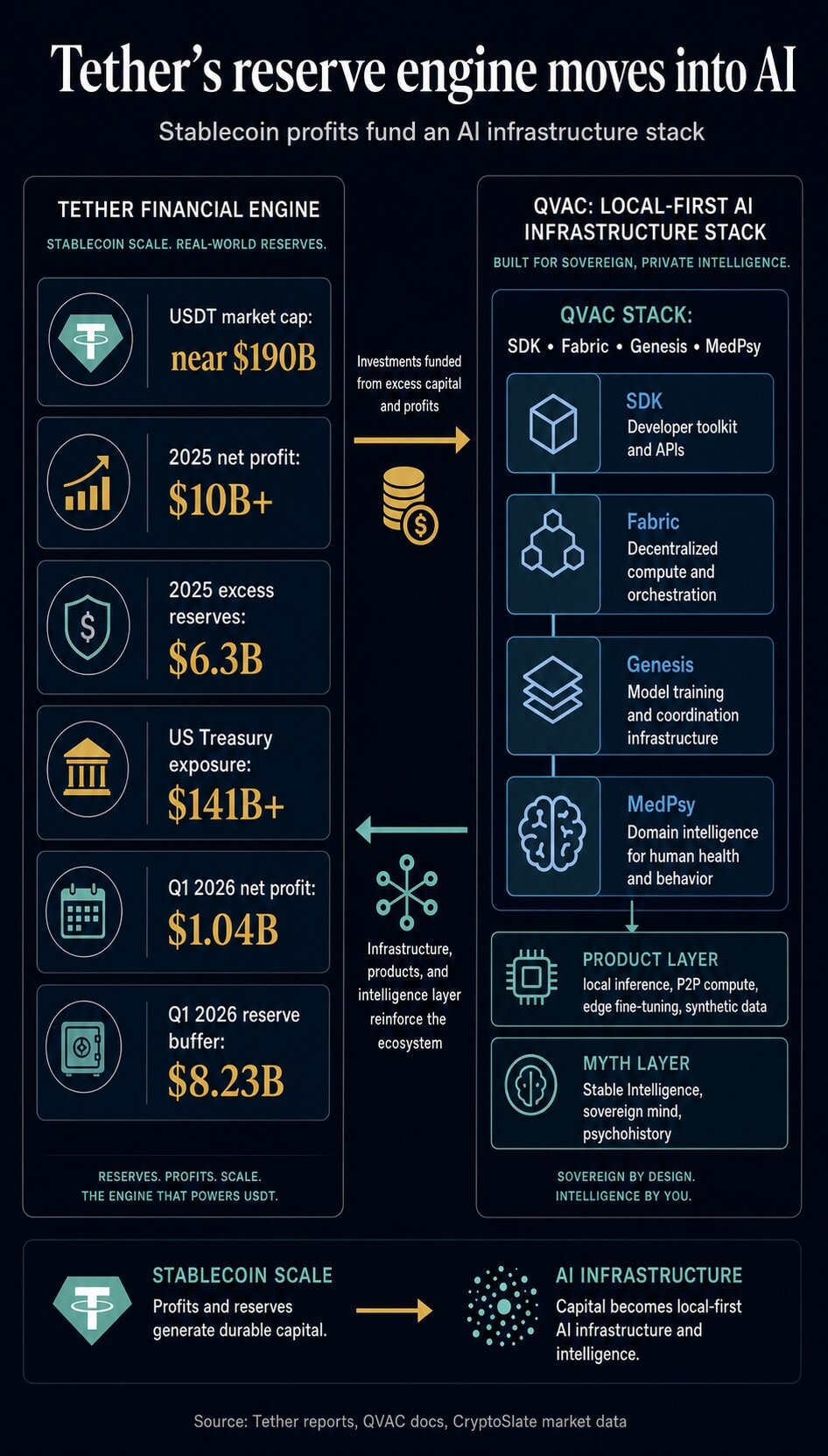

In its Q1 2026 attestation update, Tether reported $1.04 billion in net profit, an $8.23 billion reserve buffer, roughly $183 billion in token-related liabilities, and about $141 billion in direct and indirect exposure to U.S. Treasury bills. That reserve base gives Tether recurring income, balance-sheet capacity, and room to fund long-duration infrastructure bets from operating strength.
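The attestation figures imply a couple of simple ratios. The sketch below is illustrative only: it assumes total reserves equal token-related liabilities plus the reported buffer, which is a simplification and not a figure taken from the attestation itself, and it uses the rounded numbers quoted above.

```python
# Figures as quoted in the article, in USD billions (rounded).
liabilities = 183.0      # token-related liabilities
reserve_buffer = 8.23    # reported reserve buffer
tbill_exposure = 141.0   # direct and indirect U.S. Treasury bill exposure

# Assumption for illustration: total reserves = liabilities + buffer.
total_reserves = liabilities + reserve_buffer

# Buffer relative to what token holders are owed.
buffer_ratio = reserve_buffer / liabilities

# Share of the assumed reserve total held in Treasury bills.
tbill_share = tbill_exposure / total_reserves

print(f"Assumed total reserves: ${total_reserves:.2f}B")
print(f"Buffer over liabilities: {buffer_ratio:.1%}")
print(f"T-bill share of reserves: {tbill_share:.1%}")
```

On these rounded inputs the buffer works out to roughly 4.5% of liabilities, and Treasury bills to roughly three quarters of the assumed reserve total.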

CryptoSlate has already tracked how this reserve engine can turn stablecoin scale into strategic allocation. In January, Tether’s 8,888 BTC purchase showed how interest income and operating profits can translate into recurring Bitcoin demand. QVAC pushes the same logic into a different asset class.

Alongside Bitcoin, gold, startups, energy, mining, communications, and other infrastructure positions, Tether is allocating into intelligence itself. The move extends the company’s self-image from issuer of private dollar liquidity to builder of private digital infrastructure.

The “psychohistory” language fits that direction because Tether is framing AI as a civilizational layer rather than a software vertical. QVAC’s public materials describe an “Infinite Stable Intelligence Platform,” a local-first system for the “decentralized mind,” and an answer to centralized AI.

The QVAC vision page argues that routing every thought through centralized servers is too slow, fragile, and controlled, and then positions QVAC as an edge-native foundation for intelligence that users themselves possess.

That framing mirrors Tether’s broader stablecoin pitch. Money should move without permission. Data should stay with the user. Intelligence should run where the user is.

The most serious claim, however, sits underneath the Asimov reference. Tether is saying that AI becomes more durable when it behaves like resilient infrastructure.

A cloud model can be more capable, yet it carries provider risk, pricing risk, policy risk, latency risk, and data-routing risk.

A local model gives up part of the frontier capability curve in exchange for ownership, privacy, and continuity.

The trade is familiar in crypto. Self-custody is less convenient than an exchange until the exchange fails. Local AI is less convenient than a hosted frontier model until the network drops, the API changes, the account closes, or the data cannot leave the device.

QVAC is an edge stack built around a different race

QVAC’s key distinction is architectural. OpenAI, Anthropic, Google DeepMind, and xAI compete across maximum general capability, coding, multimodality, long-context reasoning, agentic behavior, and enterprise cloud distribution.

QVAC aims at a different axis: deployability, privacy, latency, composability, and survival outside a single provider.

The QVAC welcome documentation defines the project as an open-source, cross-platform ecosystem for local-first, peer-to-peer AI applications across Linux, macOS, Windows, Android, and iOS. The same documentation says users can run LLMs, perform speech recognition and retrieval-augmented generation, and handle other AI tasks locally, or delegate inference to peers via built-in P2P capabilities.

That gives QVAC a different benchmark from the frontier labs. Frontier AI optimizes for the strongest general model available through a centralized service. QVAC optimizes for where inference happens, who controls the runtime, what data leaves the device, and whether an application can continue operating when centralized services become unavailable.
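The local-first idea described here can be sketched as a simple routing pattern: try the on-device runtime first, then fall back to a peer. This is not the QVAC SDK API. Every name below (`run_local_first`, `BackendUnavailable`, the stub backends) is hypothetical and exists only to illustrate the ordering of choices.

```python
from typing import Callable, List, Tuple

# Hypothetical backend type: takes a prompt, returns generated text.
Backend = Callable[[str], str]


class BackendUnavailable(Exception):
    """Raised when a backend cannot serve the request."""


def run_local_first(prompt: str, backends: List[Tuple[str, Backend]]) -> Tuple[str, str]:
    """Try backends in priority order and return (backend name, output).

    The caller lists the on-device backend first, so data only leaves
    the device when local inference is not possible.
    """
    errors = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except BackendUnavailable as exc:
            errors.append(f"{name}: {exc}")
    raise BackendUnavailable("; ".join(errors))


# Stub backends standing in for real runtimes (assumptions for illustration).
def local_model(prompt: str) -> str:
    return f"[local] {prompt}"


def overloaded_local_model(prompt: str) -> str:
    raise BackendUnavailable("model does not fit in device memory")


def peer_model(prompt: str) -> str:
    return f"[peer] {prompt}"


# Normal case: the local runtime answers and the peer is never contacted.
print(run_local_first("hello", [("local", local_model), ("peer", peer_model)]))

# Fallback case: local inference fails, so the request is delegated to a peer.
print(run_local_first("hello", [("local", overloaded_local_model), ("peer", peer_model)]))
```

The design choice worth noting is the ordering: a hosted API would sit at the end of the list or be absent entirely, which is the inverse of the cloud-first default.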

Tether’s April 2026 SDK launch describes a unified development kit that lets developers build, run, and fine-tune AI on any device, with applications designed to run unchanged across iOS, Android, Windows, macOS, and Linux.

It also says that the QVAC SDK uses a unified abstraction layer over local inference engines, including QVAC Fabric, a fork of llama.cpp, plus integrations with whisper.cpp, Parakeet, and Bergamot for speech and translation.

That is closer to an operating layer than a single model release. The open-source AI ecosystem already has powerful pieces: Llama, Qwen, Mistral, Gemma, DeepSeek, Hugging Face, llama.cpp, Ollama, vLLM, LM Studio, and a long tail of local inference projects.

QVAC’s bet is that developers need a coherent edge framework that joins model loading, inference, speech, OCR, translation, image generation, RAG, P2P model distribution, delegated inference, and local fine-tuning through one interface.

QVAC is positioning itself as a distribution layer for intelligence, assuming that good-enough local models will continue to improve.

QVAC Fabric is the technical center of that claim. Tether says Fabric supports fine-tuning across modern consumer hardware through Vulkan and Metal backends, including Android devices with Qualcomm Adreno or ARM Mali GPUs, Apple Silicon devices, and standard Windows or Linux setups with AMD, Intel, or NVIDIA hardware.

It also describes dynamic tiling for mobile GPU memory limits and a LoRA workflow with GPU acceleration and masked-loss instruction tuning.

If that workflow holds up in external developer use, the distinction from typical open-source model releases becomes material. The model weights are one layer. Local adaptation becomes the next layer.

MedPsy is QVAC’s first hard test

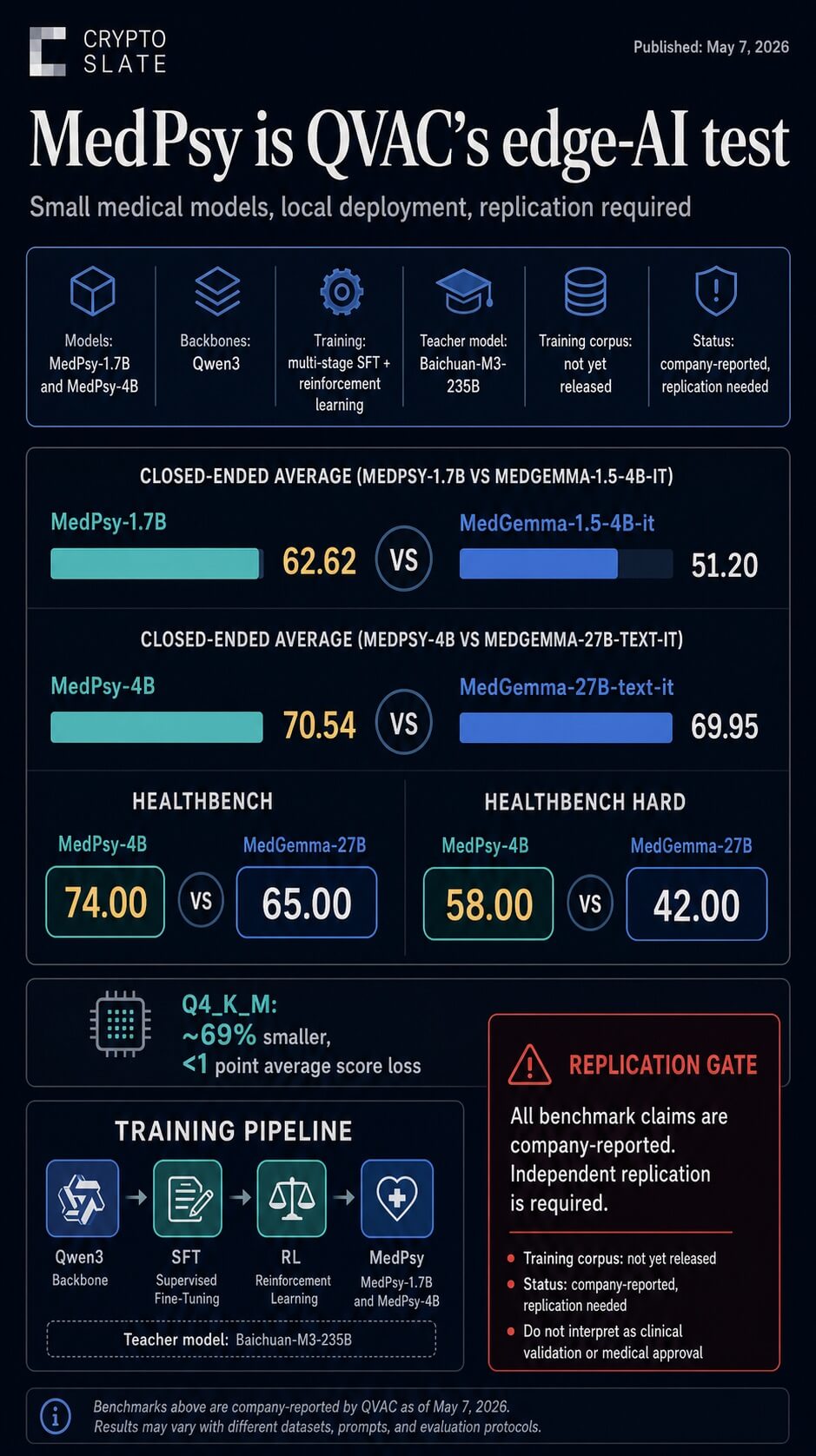

MedPsy gives QVAC its first concrete model-level proof point. The Hugging Face technical report, published May 7, presents QVAC MedPsy as a family of text-only medical and healthcare language models built for edge deployment at 1.7 billion and 4 billion parameters.

The claim is ambitious: smaller models, trained through a tightly controlled medical post-training pipeline, can outperform larger medical baselines while remaining practical for laptops, high-end mobile devices, and smartphone-class applications.

QVAC says MedPsy-1.7B scores 62.62 across seven closed-ended medical benchmarks, above Google’s MedGemma-1.5-4B-it at 51.20, despite being less than half its size.

It also says MedPsy-4B scores 70.54, slightly above MedGemma-27B-text-it at 69.95, while being nearly seven times smaller.

On HealthBench and HealthBench Hard, QVAC reports a wider gap, with MedPsy-4B scoring 74.00 and 58.00 versus MedGemma-27B-text-it at 65.00 and 42.67 under the CompassJudger evaluation shown in the report.
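The size and score comparisons above reduce to a few lines of arithmetic. The sketch below uses only the numbers QVAC reports, which have not been independently verified, and treats the parameter counts in the model names as nominal sizes.

```python
# Scores and sizes as reported by QVAC in the article; not independently verified.
# Each entry: (model name, parameters in billions, seven-benchmark average score).
comparisons = [
    (("MedPsy-1.7B", 1.7, 62.62), ("MedGemma-1.5-4B-it", 4.0, 51.20)),
    (("MedPsy-4B", 4.0, 70.54), ("MedGemma-27B-text-it", 27.0, 69.95)),
]


def summarize(small, large):
    """Return how many times larger the baseline is and the score gap in points."""
    _, small_params, small_score = small
    _, large_params, large_score = large
    return round(large_params / small_params, 2), round(small_score - large_score, 2)


for small, large in comparisons:
    ratio, gap = summarize(small, large)
    print(f"{small[0]} vs {large[0]}: baseline is {ratio}x larger, gap {gap:+.2f} points")

# HealthBench and HealthBench Hard: MedPsy-4B minus MedGemma-27B-text-it.
healthbench_gaps = {
    "HealthBench": round(74.00 - 65.00, 2),
    "HealthBench Hard": round(58.00 - 42.67, 2),
}
print(healthbench_gaps)
```

The closed-ended gap at the 4B tier is well under one point, so the HealthBench results carry most of the weight in the claim that MedPsy-4B beats the 27B baseline.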

Those results, if independently reproduced, would support the core QVAC thesis: domain-specific, edge-scale models can challenge much larger systems in constrained, high-value categories.

The training recipe also shows how QVAC plans to compete. The report says MedPsy uses Qwen3 backbones and then applies multi-stage supervised fine-tuning and reinforcement learning to medical QA tasks.

It generated more than 30 million synthetic rows during experimentation, used a two-stage curriculum, and selected Baichuan-M3-235B as the single teacher model for long-form reasoning supervision. QVAC also states that the training corpus has not yet been released. That caveat is central.

The strongest public benchmark claims still come from QVAC itself, and the training data needed to fully interrogate contamination, coverage, prompt construction, and teacher influence remains unavailable.

The edge angle becomes sharper in quantization. QVAC says GGUF variants are published for llama.cpp and QVAC SDK, with Q4_K_M reducing file size by 69% while losing less than one average score point for both MedPsy sizes.

The report recommends Q4_K_M with imatrix calibration as the size-and-quality trade-off: 2.72 GB for the 4B model and 1.28 GB for the 1.7B model. The QVAC models FAQ also warns that MedPsy is text-only, English-only, unsuitable for emergencies, vulnerable to hallucination, and dependent on developers preserving privacy across the full application architecture. Those limits give the technical claim its proper shape.
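The quantization figures can be cross-checked with a quick back-of-the-envelope calculation. The sketch below assumes the 69% reduction applies equally to both model sizes and is measured against the unquantized files. The resulting baseline sizes are implied values, not numbers taken from the report.

```python
# Figures as quoted in the article.
reduction = 0.69  # Q4_K_M file-size reduction
q4_sizes_gb = {"MedPsy-4B": 2.72, "MedPsy-1.7B": 1.28}

# Assumption: the same 69% reduction holds for both models, so
# original_size = quantized_size / (1 - reduction).
implied_original_gb = {
    name: size / (1 - reduction) for name, size in q4_sizes_gb.items()
}

for name, original in implied_original_gb.items():
    quantized = q4_sizes_gb[name]
    print(f"{name}: about {original:.2f} GB unquantized -> {quantized:.2f} GB at Q4_K_M")
```

Under that assumption the unquantized files would be on the order of 9 GB and 4 GB, which is the difference between a model that strains a phone and one that fits comfortably in its memory.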

MedPsy is promising because medicine has strong reasons to prefer local inference. It remains unproven until external researchers reproduce the benchmark ladder and test it under real clinical workflow constraints.

The unresolved fight is convenience versus control

The local-versus-cloud AI debate is usually framed as a choice between privacy and performance. QVAC reframes it as convenience against control.

Cloud AI wins on ease. The user opens an app, sends a prompt, receives an answer, and avoids the operational burden of model weights, device memory, quantization, embeddings, or runtime compatibility.

The provider absorbs the complexity. That convenience is powerful, and it explains why centralized AI platforms have scaled so quickly. The user gets frontier capability with minimal setup.

QVAC asks developers and users to accept more responsibility in exchange for a different security model. The reward is local execution, offline operation, reduced data exposure, lower dependency on API access, and a path toward peer-to-peer inference and model distribution.

Tether’s SDK launch says QVAC-powered apps can keep working in low-connectivity environments and that “if the internet goes down, the AI keeps working.” Its 2025 QVAC announcement went further, describing AI agents running directly on local devices, peer-to-peer networking for device-to-device collaboration, and WDK integration that would allow AI agents to transact in Bitcoin and USDt.

That is the full Tether thesis: money, computation, and autonomous agents should share the same sovereign design pattern.

The decentralization claim isn’t quite as straightforward as some would like. QVAC is meaningfully decentralized at the inference layer when a user can download a model, run it locally, and keep sensitive data on device.

It is more decentralized than a hosted API because the provider no longer sits inside every prompt.

It also adds peer-to-peer primitives through the Holepunch stack, including delegated inference and decentralized model distribution, according to Tether’s SDK materials. Those are substantive design choices.

Governance is a separate layer. QVAC is funded, named, coordinated, and promoted by Tether. The flagship apps, model family, SDK roadmap, and “Stable Intelligence” language all originate from a single corporate sponsor.

That structure coexists with the local-first value proposition. It narrows the decentralization claim to where the evidence is strongest.

QVAC decentralizes where inference can happen. The broader ecosystem still needs evidence of distributed control over default registries, release channels, safety conventions, model inclusion, and long-term governance.

Replication is the next threshold

QVAC’s credibility now sits on replication. If MedPsy’s results reproduce outside QVAC’s own evaluation harness, Tether will have a credible first example of its intelligence-reserve thesis: small, open, locally deployable models that can compete with larger cloud-oriented systems in a sensitive domain.

If independent testing narrows or reverses the benchmark gap, QVAC still has an infrastructure argument, while its model claim carries less weight. The broader fight then returns to the oldest trade in technology: convenience concentrates power, while control imposes work.

That is where the Asimov pitch becomes useful. Psychohistory in Foundation was concerned with large systems under stress. Tether’s version focuses on infrastructure under centralization. The language is grand, and the technical proof remains early, but the direction is coherent.

Tether is leveraging the cash flows of the world’s largest stablecoin to build an AI stack focused on local execution, peer networks, open tooling, and edge-scale models. It is extending the stablecoin premise from money to intelligence.

The question is no longer whether a stablecoin company can afford to build AI. Tether clearly can.

The question is whether QVAC can produce models and infrastructure strong enough to make users accept the friction of local control.

MedPsy is the first measurable threshold. Independent replication will determine whether QVAC’s psychohistory language remains a metaphor or begins to resemble the early operating logic of a serious edge-AI stack.