Google DeepMind and Google Cloud have unveiled a new tool designed to help identify AI-generated images, according to an August 29 blog post.

SynthID, currently in beta, aims to curb the spread of misinformation by embedding an invisible, permanent watermark in images to mark them as computer-generated. It is available to a limited number of Vertex AI customers using Imagen, one of Google’s text-to-image generators.

The watermark is embedded directly into the pixels of an image created by Imagen and remains intact even after modifications such as adding filters or changing colors.

Beyond adding watermarks, SynthID can also scan an image and assess the likelihood that it was created by Imagen.

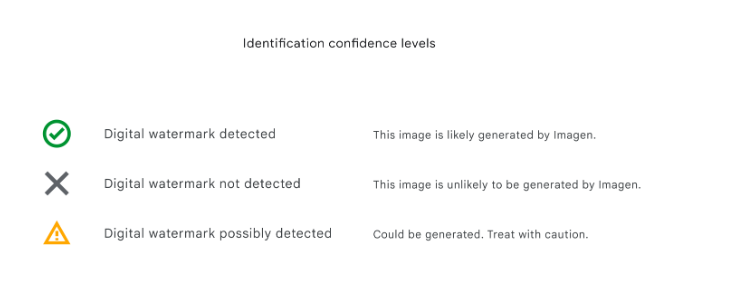

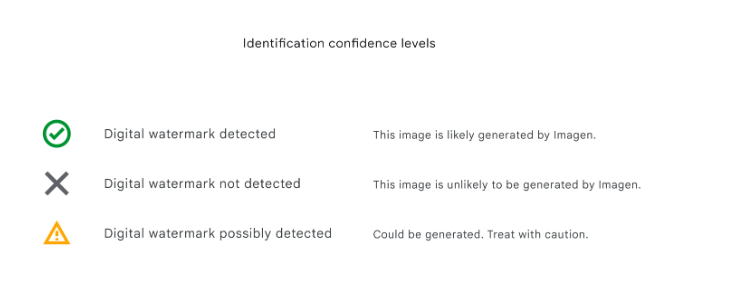

When checking an image for the watermark, the tool reports one of three “confidence” levels:

- “Detected” – the image was likely generated by Imagen.

- “Not detected” – the image was unlikely to have been generated by Imagen.

- “Possibly detected” – the image could have been generated by Imagen. Treat with caution.

In the blog post, Google acknowledged that the technology “isn’t perfect,” but said its internal testing showed the tool to be accurate against many common image manipulations.

As deepfake technology advances, tech companies are actively looking for ways to identify and flag manipulated content, particularly content designed to disrupt public order and create panic, such as the fake image of an explosion near the Pentagon.

The EU is already pushing for technology that can recognize and label this type of content through its Code of Practice on Disinformation, whose signatories include Google, Meta, Microsoft, TikTok, and other social media platforms. The Code is the first self-regulatory framework, rather than binding legislation, intended to encourage companies to collaborate on solutions for combating misinformation. When it was first introduced in 2018, 21 companies had already agreed to commit to it.

While Google has taken its own approach to the challenge, a consortium called the Coalition for Content Provenance and Authenticity (C2PA), backed by Adobe, has been a leader in content-provenance efforts. Google previously introduced the “About this image” tool to give users information about the origins of images found through its platform.

SynthID is another next-generation method for identifying digital content, acting as a kind of “upgrade” to identifying content through its metadata. Because SynthID’s invisible watermark is embedded in an image’s pixels, it is compatible with metadata-based identification methods and remains detectable even when that metadata is lost.

However, given how rapidly AI technology is advancing, it remains unclear whether technical solutions like SynthID can fully address the growing challenge of misinformation.

Editor’s note: This article was written by an nft now staff member in collaboration with OpenAI’s GPT-4.